
Add this skill

npx mdskills install Orchestra-Research/ai-research-skills

Comprehensive collection of 85 expert-level AI research skills across 21 categories with production-ready workflows

Skills Library

The most comprehensive open-source library of AI research engineering skills for AI agents

View All 21 Categories

| Model Architecture (5) | Fine-Tuning (4) | Post-Training (8) |

| Distributed Training (6) | Optimization (6) | Inference (4) |

| Tokenization (2) | Data Processing (2) | Evaluation (3) |

| Safety & Alignment (4) | Agents (4) | RAG (5) |

| Multimodal (7) | Prompt Engineering (4) | MLOps (3) |

| Observability (2) | Infrastructure (3) | Mech Interp (4) |

| Emerging Techniques (6) | ML Paper Writing (1) | Ideation (2) |

We provide the layer of engineering ability that enables your coding agent to write and conduct AI research experiments, including preparing datasets, executing training pipelines, deploying models, and building your AI agents.

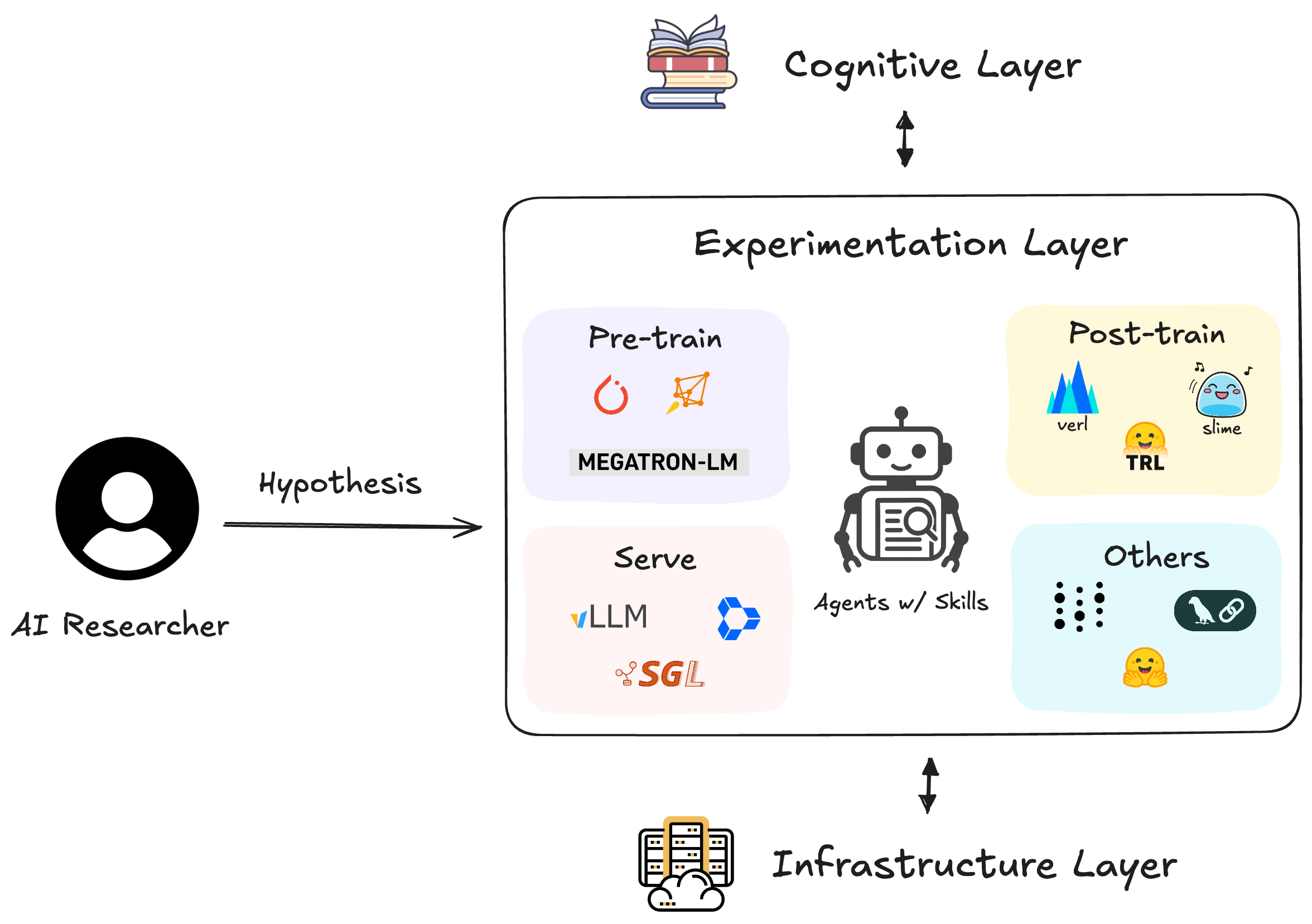

System diagram of an AI research agent

Modern AI research requires mastering dozens of specialized tools and frameworks. AI Researchers spend more time debugging infrastructure than testing hypotheses—slowing the pace of scientific discovery. We provide a comprehensive library of expert-level research engineering skills that enable AI agents to autonomously implement and execute different stages of AI research experiments—from data preparation and model training to evaluation and deployment.

Quality over quantity: Each skill provides comprehensive, expert-level guidance with real code examples, troubleshooting guides, and production-ready workflows.

Install skills to any coding agent (Claude Code, OpenCode, Cursor, Codex, Gemini CLI, Qwen Code) with one command:

npx @orchestra-research/ai-research-skills

This launches an interactive installer that:

~/.orchestra/skills/ with symlinks to each agent

CLI Commands

# Interactive installer (recommended)

npx @orchestra-research/ai-research-skills

# Direct commands

npx @orchestra-research/ai-research-skills list # View installed skills

npx @orchestra-research/ai-research-skills update # Update installed skills

Claude Code Marketplace (Alternative)

Install skill categories directly using the Claude Code CLI:

# Add the marketplace

/plugin marketplace add orchestra-research/AI-research-SKILLs

# Install by category (21 categories available)

/plugin install fine-tuning@ai-research-skills # Axolotl, LLaMA-Factory, PEFT, Unsloth

/plugin install post-training@ai-research-skills # TRL, GRPO, OpenRLHF, SimPO, verl, slime, miles, torchforge

/plugin install inference-serving@ai-research-skills # vLLM, TensorRT-LLM, llama.cpp, SGLang

/plugin install distributed-training@ai-research-skills

/plugin install optimization@ai-research-skills

| Category | Skills | Included |
|---|---|---|
| Model Architecture | 5 | LitGPT, Mamba, NanoGPT, RWKV, TorchTitan |
| Tokenization | 2 | HuggingFace Tokenizers, SentencePiece |
| Fine-Tuning | 4 | Axolotl, LLaMA-Factory, PEFT, Unsloth |
| Mech Interp | 4 | TransformerLens, SAELens, pyvene, nnsight |
| Data Processing | 2 | NeMo Curator, Ray Data |
| Post-Training | 8 | TRL, GRPO, OpenRLHF, SimPO, verl, slime, miles, torchforge |
| Safety | 4 | Constitutional AI, LlamaGuard, NeMo Guardrails, Prompt Guard |
| Distributed | 6 | DeepSpeed, FSDP, Accelerate, Megatron-Core, Lightning, Ray Train |
| Infrastructure | 3 | Modal, Lambda Labs, SkyPilot |
| Optimization | 6 | Flash Attention, bitsandbytes, GPTQ, AWQ, HQQ, GGUF |
| Evaluation | 3 | lm-eval-harness, BigCode, NeMo Evaluator |
| Inference | 4 | vLLM, TensorRT-LLM, llama.cpp, SGLang |
| MLOps | 3 | W&B, MLflow, TensorBoard |
| Agents | 4 | LangChain, LlamaIndex, CrewAI, AutoGPT |
| RAG | 5 | Chroma, FAISS, Pinecone, Qdrant, Sentence Transformers |
| Prompt Eng | 4 | DSPy, Instructor, Guidance, Outlines |
| Observability | 2 | LangSmith, Phoenix |
| Multimodal | 7 | CLIP, Whisper, LLaVA, BLIP-2, SAM, Stable Diffusion, AudioCraft |
| Emerging | 6 | MoE, Model Merging, Long Context, Speculative Decoding, Distillation, Pruning |
| ML Paper Writing | 1 | ML Paper Writing (LaTeX templates, citation verification) |
| Ideation | 2 | Research Brainstorming, Creative Thinking |
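As a quick sanity check on the headline numbers, the per-category counts from the table above can be summed in a few lines of Python. This is only an illustrative sketch: the dictionary is hand-copied from the table, and the name `SKILLS_PER_CATEGORY` is hypothetical, not something the repository ships.

```python
# Per-category skill counts, hand-copied from the table above.
# SKILLS_PER_CATEGORY is a hypothetical name used only for this sketch.
SKILLS_PER_CATEGORY = {
    "Model Architecture": 5,
    "Tokenization": 2,
    "Fine-Tuning": 4,
    "Mech Interp": 4,
    "Data Processing": 2,
    "Post-Training": 8,
    "Safety": 4,
    "Distributed": 6,
    "Infrastructure": 3,
    "Optimization": 6,
    "Evaluation": 3,
    "Inference": 4,
    "MLOps": 3,
    "Agents": 4,
    "RAG": 5,
    "Prompt Eng": 4,
    "Observability": 2,
    "Multimodal": 7,
    "Emerging": 6,
    "ML Paper Writing": 1,
    "Ideation": 2,
}

category_count = len(SKILLS_PER_CATEGORY)
total_skills = sum(SKILLS_PER_CATEGORY.values())

print(f"{category_count} categories, {total_skills} skills")
# prints "21 categories, 85 skills"
```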


All 85 skills in this repo are automatically synced to Orchestra Research, where you can add them to your projects with one click and use them with AI research agents.

See skills in action → demos/

We maintain a curated collection of demo repositories showing how to use skills for real AI research tasks:

| Demo | Skills Used | What It Does |
|---|---|---|
| NeMo Eval: GPQA Benchmark | NeMo Evaluator | Compare Llama 8B/70B/405B on graduate-level science questions |
| LoRA Without Regret Reproduction | GRPO, TRL | Reproduce SFT + GRPO RL experiments via prompting |
| ML Paper Writing (coming soon) | ML Paper Writing | Transform research repo → publication-ready paper |
| Layer-Wise Quantization Experiment | llama.cpp, GGUF | Investigate optimal layer precision allocation: early layers at Q8 achieve 1.9× compression with 1.3% perplexity loss |
| Cross-Lingual Alignment Analysis | FAISS | Quantify how well multilingual embeddings align semantic concepts across 8 languages using FAISS similarity search |

Featured Demo: Reproduce Thinking Machines Lab's "LoRA Without Regret" paper by simply prompting an AI agent. The agent autonomously writes training code for both SFT and GRPO reinforcement learning, provisions H100 GPUs, runs LoRA rank ablation experiments overnight, and generates publication-ready analysis. No manual coding required—just describe what you want to reproduce. (Blog | Video)

Each skill follows a battle-tested format for maximum usefulness:

skill-name/
├── SKILL.md                  # Quick reference (50-150 lines)
│   ├── Metadata (name, description, version)
│   ├── When to use this skill
│   ├── Quick patterns & examples
│   └── Links to references
│
├── references/               # Deep documentation (300KB+)
│   ├── README.md             # From GitHub/official docs
│   ├── api.md                # API reference
│   ├── tutorials.md          # Step-by-step guides
│   ├── issues.md             # Real GitHub issues & solutions
│   ├── releases.md           # Version history & breaking changes
│   └── file_structure.md     # Codebase navigation
│
├── scripts/                  # Helper scripts (optional)
└── assets/                   # Templates & examples (optional)
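The layout above lends itself to a simple automated check. The following Python sketch is one way to verify that a skill directory contains the files named in the tree. The function name `check_skill_layout` is hypothetical, and treating `SKILL.md` and every file under `references/` as required is an assumption of this sketch rather than a documented rule of the repository.

```python
from pathlib import Path

# File names are taken from the tree above. Treating all of them as
# required is an assumption of this sketch; scripts/ and assets/ are
# marked optional in the tree, so they are not checked.
REFERENCE_FILES = [
    "README.md",
    "api.md",
    "tutorials.md",
    "issues.md",
    "releases.md",
    "file_structure.md",
]


def check_skill_layout(skill_dir):
    """Return a list of problems; an empty list means the layout matches."""
    root = Path(skill_dir)
    problems = []

    if not (root / "SKILL.md").is_file():
        problems.append("missing SKILL.md")

    refs = root / "references"
    if not refs.is_dir():
        problems.append("missing references/")
    else:
        for name in REFERENCE_FILES:
            if not (refs / name).is_file():
                problems.append(f"missing references/{name}")

    return problems
```

Calling `check_skill_layout("path/to/skill-name")` returns an empty list when everything is in place, so it can be dropped into a pre-commit hook or CI step without extra dependencies.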

Quality Standards

We set out to build 80 comprehensive skills across the full AI research lifecycle; the library now contains 85. See our detailed roadmap for the complete development plan.

View Detailed Statistics

| Metric | Current | Target |
|---|---|---|
| Skills | 85 (high-quality, standardized YAML) | 80 ✅ |
| Avg Lines/Skill | 420 lines (focused + progressive disclosure) | 200-600 lines |
| Documentation | ~130,000 lines total (SKILL.md + references) | 100,000+ lines |
| Gold Standard Skills | 65 with comprehensive references | 50+ |
| Contributors | 1 | 100+ |
| Coverage | Architecture, Tokenization, Fine-Tuning, Mechanistic Interpretability, Data Processing, Post-Training, Safety, Distributed, Optimization, Evaluation, Infrastructure, Inference, Agents, RAG, Multimodal, Prompt Engineering, MLOps, Observability, ML Paper Writing, Ideation | Full Lifecycle ✅ |

Recent Progress: npm package @orchestra-research/ai-research-skills for one-command installation across all coding agents

Philosophy: Quality > Quantity. Following Anthropic's official best practices, each skill provides 200-500 lines of focused, actionable guidance with progressive disclosure.

claude-ai-research-skills/
├── README.md ← You are here
├── CONTRIBUTING.md ← Contribution guide
├── demos/ ← Curated demo gallery (links to demo repos)
├── docs/
├── 01-model-architecture/ (5 skills ✓ - LitGPT, Mamba, RWKV, NanoGPT, TorchTitan)
├── 02-tokenization/ (2 skills ✓ - HuggingFace Tokenizers, SentencePiece)
├── 03-fine-tuning/ (4 skills ✓ - Axolotl, LLaMA-Factory, Unsloth, PEFT)
├── 04-mechanistic-interpretability/ (4 skills ✓ - TransformerLens, SAELens, pyvene, nnsight)
├── 05-data-processing/ (2 skills ✓ - Ray Data, NeMo Curator)
├── 06-post-training/ (8 skills ✓ - TRL, GRPO, OpenRLHF, SimPO, verl, slime, miles, torchforge)
├── 07-safety-alignment/ (4 skills ✓ - Constitutional AI, LlamaGuard, NeMo Guardrails, Prompt Guard)
├── 08-distributed-training/ (6 skills ✓ - Megatron-Core, DeepSpeed, FSDP, Accelerate, Lightning, Ray Train)
├── 09-infrastructure/ (3 skills ✓ - Modal, SkyPilot, Lambda Labs)
├── 10-optimization/ (6 skills ✓ - Flash Attention, bitsandbytes, GPTQ, AWQ, HQQ, GGUF)
├── 11-evaluation/ (3 skills ✓ - lm-evaluation-harness, BigCode, NeMo Evaluator)
├── 12-inference-serving/ (4 skills ✓ - vLLM, TensorRT-LLM, llama.cpp, SGLang)
├── 13-mlops/ (3 skills ✓ - Weights & Biases, MLflow, TensorBoard)
├── 14-agents/ (4 skills ✓ - LangChain, LlamaIndex, CrewAI, AutoGPT)
├── 15-rag/ (5 skills ✓ - Chroma, FAISS, Sentence Transformers, Pinecone, Qdrant)
├── 16-prompt-engineering/ (4 skills ✓ - DSPy, Instructor, Guidance, Outlines)
├── 17-observability/ (2 skills ✓ - LangSmith, Phoenix)
├── 18-multimodal/ (7 skills ✓ - CLIP, Whisper, LLaVA, Stable Diffusion, SAM, BLIP-2, AudioCraft)
├── 19-emerging-techniques/ (6 skills ✓ - MoE, Model Merging, Long Context, Speculative Decoding, Distillation, Pruning)
├── 20-ml-paper-writing/ (1 skill ✓ - ML Paper Writing with LaTeX templates)
├── 21-research-ideation/ (2 skills ✓ - Research Brainstorming, Creative Thinking)
└── packages/ai-research-skills/ (npm package for one-command installation)
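Because category directories follow a numbered `NN-name/` pattern, the per-category skill counts in the tree can be recomputed from a local checkout. The Python sketch below is one way to do that. It assumes each skill lives in its own subdirectory containing a `SKILL.md` (as in `03-fine-tuning/axolotl/`), and the function name `count_skills` is hypothetical, not part of the npm package.

```python
import re
from pathlib import Path

# Matches numbered category directories such as "03-fine-tuning".
CATEGORY_DIR = re.compile(r"^\d{2}-(.+)$")


def count_skills(repo_root):
    """Map each category name to the number of skill directories inside it.

    Assumption of this sketch: a skill is any direct subdirectory of a
    category directory that contains a SKILL.md file.
    """
    counts = {}
    for entry in sorted(Path(repo_root).iterdir()):
        match = CATEGORY_DIR.match(entry.name)
        if not entry.is_dir() or match is None:
            continue  # skips README.md, docs/, demos/, packages/, ...
        counts[match.group(1)] = sum(
            1
            for child in entry.iterdir()
            if child.is_dir() and (child / "SKILL.md").is_file()
        )
    return counts
```

Running `sum(count_skills(".").values())` from the repository root gives a total that can be compared against the skill count stated in this README.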

"I need to fine-tune Llama 3 with custom data" → 03-fine-tuning/axolotl/ - YAML configs, 100+ model support

"How do I optimize inference latency?" → 12-inference-serving/vllm/ - PagedAttention, batching

"I want to learn how transformers work" → 01-model-architecture/litgpt/ - Clean implementations

"We need to scale training to 100 GPUs" → 08-distributed-training/deepspeed/ - ZeRO stages, 3D parallelism

MIT License - See LICENSE for details.

Note: Individual skills may reference libraries with different licenses. Please check each project's license before use.

Built with:

Special thanks to:

We welcome contributions from the AI research community! See CONTRIBUTING.md for detailed guidelines on:

All contributors are featured in our Contributors Hall of Fame 🌟

February 2026 - v0.15.0 🛡️ Prompt Guard & 83 Skills

January 2026 - v0.14.0 📦 npm Package & 82 Skills

npx @orchestra-research/ai-research-skills - One-command installation for all coding agents

January 2026 - v0.13.0 📝 ML Paper Writing & Demos Gallery

demos/) showcasing skills in action

January 2026 - v0.12.0 📊 NeMo Evaluator SDK

December 2025 - v0.11.0 🔬 Mechanistic Interpretability

November 25, 2025 - v0.10.0 🎉 70 Skills Complete!

November 25, 2025 - v0.9.0

November 25, 2025 - v0.8.0

November 25, 2025 - v0.7.0

November 15, 2025 - v0.6.0

November 12, 2025 - v0.5.0

November 9, 2025 - v0.4.0

November 8, 2025 - v0.3.0

November 6, 2025 - v0.2.0

November 3, 2025 - v0.1.0

Join our community to stay updated, ask questions, and connect with other AI researchers:

Install via CLI

AI Research Engineering Skills Library is a free, open-source AI agent skill.

Install AI Research Engineering Skills Library with a single command:

npx mdskills install Orchestra-Research/ai-research-skills

This downloads the skill files into your project and your AI agent picks them up automatically.

AI Research Engineering Skills Library works with Claude Code, Claude Desktop, Cursor, VS Code Copilot, Windsurf, Continue Dev, Codex, Gemini CLI, Amp, Roo Code, Goose, OpenCode, Trae, Qodo, Command Code, and Factory. Skills use the open SKILL.md format, which is compatible with any AI coding agent that reads markdown instructions.